Today, we're talking about something that isn't really well explained in the Erlang documentation: resource locking.

What is a resource lock?

A resource lock ensures that only the requester of that lock can access the locked resource at any given time.

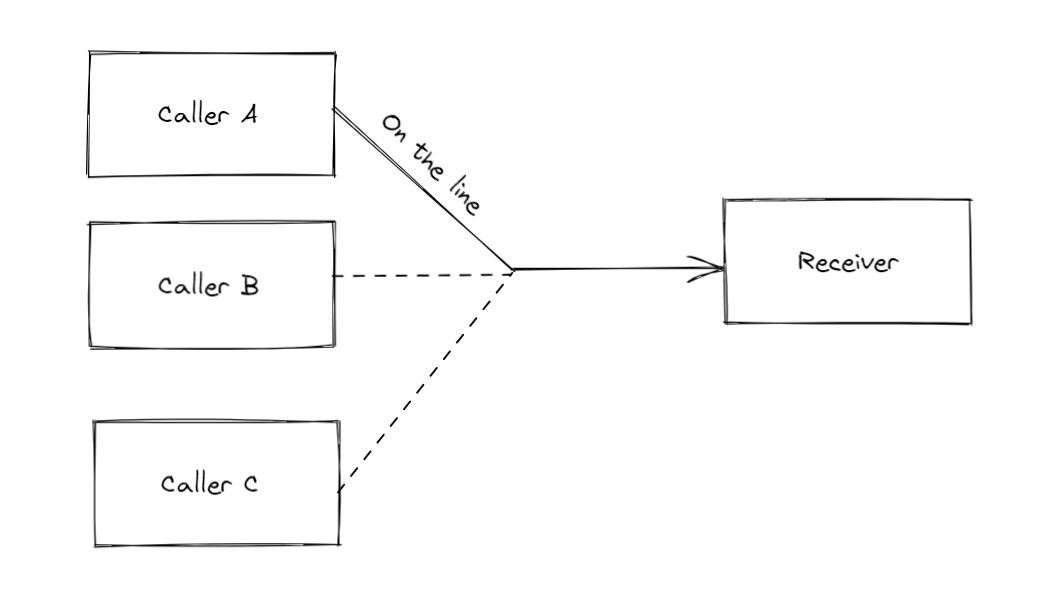

A simplified analogy is many people trying to call the same phone number at once. The owner of the phone number can only speak to one person at a time, so the first person to get through locks the line. All other callers have to wait and retry their call until the first caller is finished.

In the illustration above, there are 3 callers attempting to contact the Receiver. As the receiver can only take 1 caller at a time, the first caller that makes it through (Caller A) would be the sole entity that can communicate with the Receiver. Caller B and Caller C will need to wait until Caller A is no longer on the line with the receiver.

When applied to the BEAM, callers and receivers are replaced with processes, and a resource lock is the mechanism that dictates which process will be able to access a specified resource (this resource can be anything, and it usually is a GenServer).

When would we want to specify resource locks?

Resource locks should be used only when the resource can handle just one caller at a time and concurrent calls would corrupt the process's internal state.

Examples include writing to or manipulating a single file (such as updating a configuration file or data file) and performing non-parallelized uploads. Other use cases include throttling interaction with 3rd-party services or performing resource-intensive caching.

Setting locks allows us to interact with the resource one-by-one, as other processes that attempt to set the lock will be forced to wait and retry until the resource lock is released.

Caching Use Case

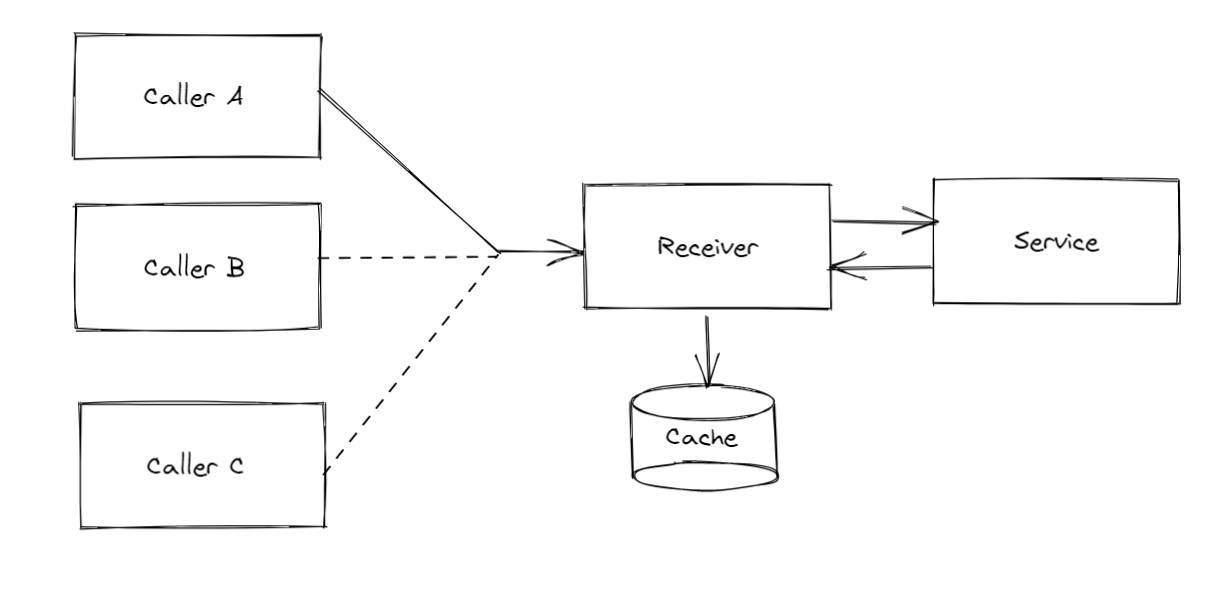

Extending our Caller-Receiver example, let's assume that the Receiver needs to interact with a 3rd-party service and performs something very resource-intensive that cannot have conflicting updates or interactions. Caller A's interaction can trigger a resource lock on the Receiver, preventing Caller B and Caller C from interacting with the Receiver while the interaction with the 3rd-party service is ongoing.

After the interaction is complete, Caller B and Caller C can then interact with the Receiver and use the cached data instead of triggering the resource-intensive interaction.

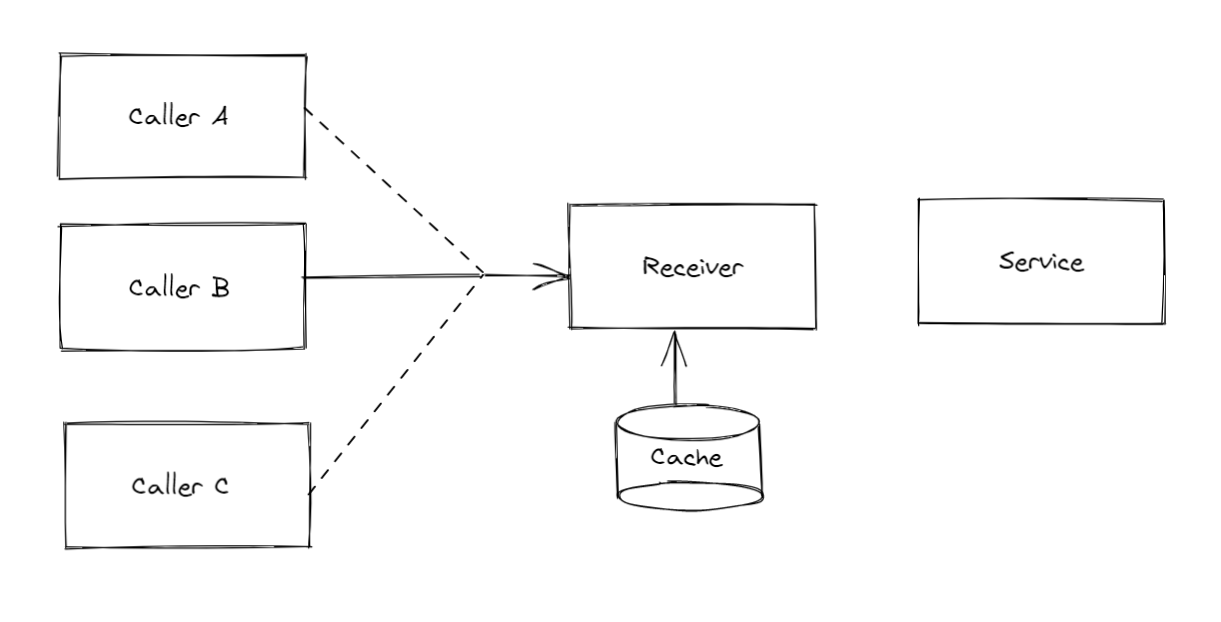

Of course, the above diagram assumes that Caller B and Caller C cannot interact with the Receiver concurrently. Since we don't need to lock the resource when using the cache, a more accurate diagram of the interaction would be one where Caller B and Caller C can interact with the cache concurrently.

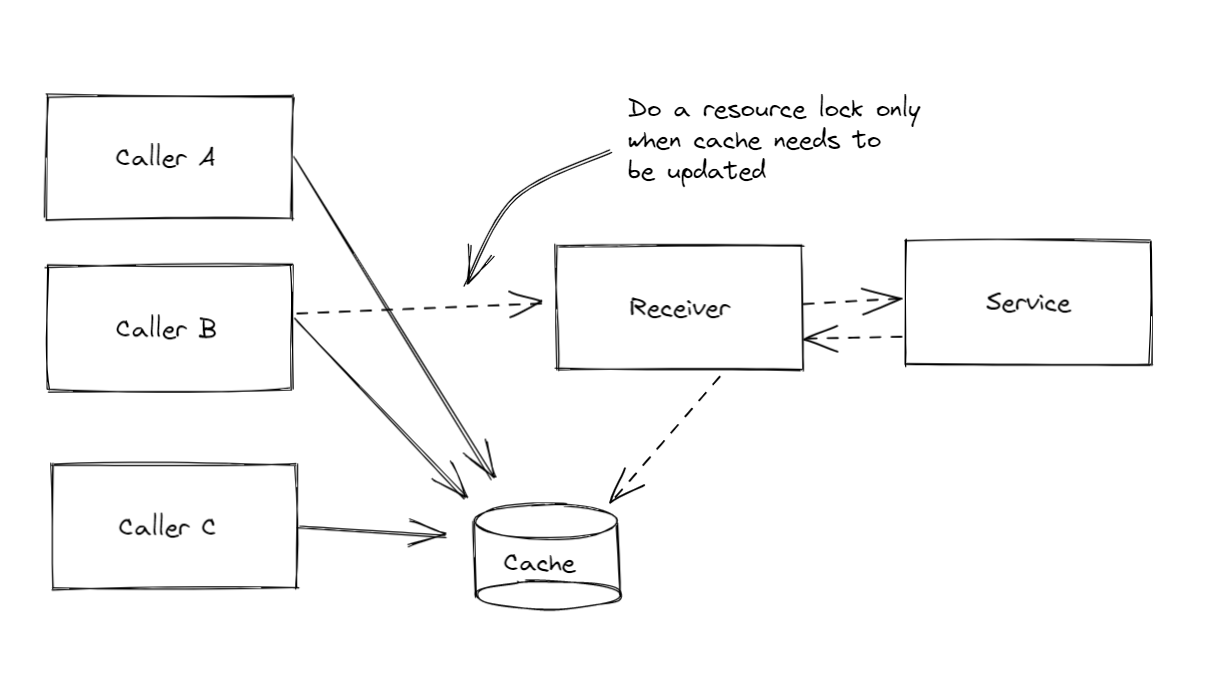

Since :global.set_lock/3 handles the lock checking and retrying for us, it is more appropriate to move the resource lock down to the point where the Receiver actually performs the intensive update, once the required condition is met. This allows Caller A, B, or C to perform the intensive update without conflicts.
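The flow described above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the implementation behind the diagrams: the CachedReceiver module, its Agent-backed cache, and the fetch/1 function are hypothetical names introduced here, and nil is assumed to mean "nothing cached yet". Reads go straight to the cache without a lock. Only a cache miss takes the lock, and the cache is checked again once the lock is held, in case another caller filled it while we were waiting.

```elixir
defmodule CachedReceiver do
  # Hypothetical module: the name and the Agent-based cache are assumptions.
  @resource __MODULE__

  def start_link do
    # nil is used as the "cache is empty" marker.
    Agent.start_link(fn -> nil end, name: __MODULE__)
  end

  # Cache reads never take the lock, so callers can read concurrently.
  def fetch(expensive_fun) do
    case Agent.get(__MODULE__, & &1) do
      nil -> refresh(expensive_fun)
      value -> value
    end
  end

  defp refresh(expensive_fun) do
    # self() is unique per caller, so it works as the requester id.
    id = {@resource, self()}

    # With the default retries this waits until no other requester holds the lock.
    true = :global.set_lock(id)

    try do
      # Re-check under the lock: another caller may have filled the cache already.
      case Agent.get(__MODULE__, & &1) do
        nil ->
          value = expensive_fun.()
          Agent.update(__MODULE__, fn _ -> value end)
          value

        value ->
          value
      end
    after
      # Always release the lock, even if expensive_fun raises.
      :global.del_lock(id)
    end
  end
end

{:ok, _pid} = CachedReceiver.start_link()
value = CachedReceiver.fetch(fn -> :expensive_result end)
```

Any later call to CachedReceiver.fetch/1 should return the cached value without running the function it is given.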

:global locks

Resource locks can be set on a single node, on a chosen list of nodes, or globally (the default is the current node plus all connected nodes). This allows fine-grained control over how you lock a particular process handling I/O.

For instance, if you have one process per node handling a file configuration update, you would not want to specify a resource lock for the Updater process on __all__ nodes, just the nodes that need to be updated.
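As a small sketch of that idea, the snippet below restricts the lock to an explicit node list. Updater is a hypothetical resource name, and the list here contains only the current node so the snippet can run on a single machine. In a real cluster you would list the nodes that need the update.

```elixir
# Updater is a hypothetical resource name; self() is used as the requester id.
lock_id = {Updater, self()}

# Only the nodes that actually need the update. Here: just the current node.
target_nodes = [Node.self()]

# The second argument limits the lock to the given nodes.
true = :global.set_lock(lock_id, target_nodes)

# ... perform the configuration update on target_nodes here ...

# Release the lock on the same node list it was set on.
true = :global.del_lock(lock_id, target_nodes)
```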

ResourceId and LockRequesterId

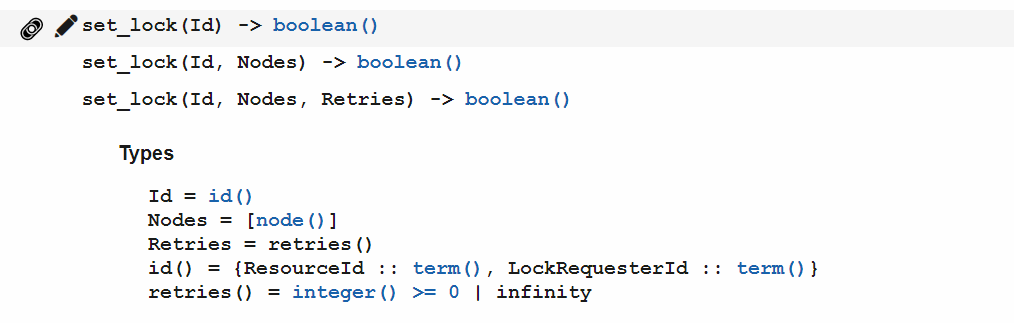

Typespecs can be found in the official docs

One thing that is a little confusing is how the resource lock ID needs to be specified. There are also not many examples out in the wild or on the official docs page, so you can be forgiven for some head-scratching while trying to get your code working.

The ResourceId can be any Erlang term, and would usually be a module or namespace.

The LockRequesterId can be any Erlang term that is unique to the requester (in our examples, Caller A could have an id of :caller_a ). It is critical to specify a unique ID so that the correct resource lock can be removed when :global.del_lock/2 is called.

Note that the Id in the typespec is a single tuple, {ResourceId, LockRequesterId}, so do not pass the two values as separate positional arguments.

Examples are always easier to process, so here are a few examples:

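    # Set the lock globally on the Receiver module, using :caller_a as the requester id.
    # With the default (infinite) retries this blocks until the lock is acquired, then returns true.
    :global.set_lock({Receiver, :caller_a})

    # Delete the lock before a different requester tries to lock the same resource;
    # otherwise the next set_lock call would wait forever
    :global.del_lock({Receiver, :caller_a})

    # Set the lock on the current node only
    :global.set_lock({Receiver, :caller_b}, [Node.self()])

    # Delete it on the same node list it was set on
    :global.del_lock({Receiver, :caller_b}, [Node.self()])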

I personally find it easier to bind the resource ID and the requester ID to separate variables, build the id tuple from them, and pass that tuple in to the functions.

Usage Caveats

It is important to note that using resource locks may leave calling processes "hanging", as they wait until the resource lock is released before proceeding with code execution. If a resource lock is never released, other processes keep waiting and retrying indefinitely, because :global.set_lock retries forever by default. Passing a finite retry count as the third argument of :global.set_lock/3 makes the call return false instead.
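One way to guard against this, sketched here with a hypothetical SafeLock helper (the module, function, and return shapes are assumptions, not part of :global), is to combine a finite retry count with a try/after block so the lock is released even when the work raises.

```elixir
defmodule SafeLock do
  # Hypothetical helper: runs fun while holding the lock, or gives up.
  def run(resource, requester, fun, retries \\ 3) do
    id = {resource, requester}
    nodes = [Node.self()]

    # With a finite retry count, set_lock/3 returns false instead of waiting forever.
    if :global.set_lock(id, nodes, retries) do
      try do
        {:ok, fun.()}
      after
        # Runs even if fun raises, so the lock is never left behind.
        :global.del_lock(id, nodes)
      end
    else
      {:error, :locked}
    end
  end
end

# While :caller_a holds the lock, :caller_b tries once (0 retries) and gives up.
result =
  SafeLock.run(Receiver, :caller_a, fn ->
    SafeLock.run(Receiver, :caller_b, fn -> :never_runs end, 0)
  end)
```

Here the inner call comes back as {:error, :locked} rather than hanging, and the outer call wraps that in {:ok, ...} before releasing its own lock.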

Do GenServers need resource locks?

No, they do not. This is because they process messages sequentially and not concurrently.

From the Elixir language docs:

There are two types of requests you can send to a GenServer: calls and casts... Both requests are messages sent to the server, and will be handled in sequence.

From https://elixir-lang.org/getting-started/mix-otp/genserver.html

However, you would need to use resource locks if you have a pool of processes (for example, if you are using poolboy). Specifying a resource lock would help with race conditions and simultaneous conflicting I/O updates that would occur with process pools.
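A rough sketch of that situation is shown below, using plain Task workers as a stand-in for a poolboy pool. The CounterFile module and the temporary file are hypothetical. The example uses :global.trans/2, which sets the lock, runs the given function, and deletes the lock afterwards. Without the lock, the concurrent read-modify-write cycles could overwrite each other's updates.

```elixir
defmodule CounterFile do
  # Hypothetical resource: a file holding an integer that workers increment.
  def increment(path) do
    # The resource id can be any term; here it is scoped to this module and file.
    lock_id = {{__MODULE__, path}, self()}

    :global.trans(lock_id, fn ->
      current = path |> File.read!() |> String.trim() |> String.to_integer()
      File.write!(path, Integer.to_string(current + 1))
    end)
  end
end

path = Path.join(System.tmp_dir!(), "counter_#{System.unique_integer([:positive])}.txt")
File.write!(path, "0")

# Four concurrent workers perform ten increments in total.
1..10
|> Task.async_stream(fn _ -> CounterFile.increment(path) end,
  max_concurrency: 4,
  timeout: 60_000
)
|> Stream.run()

final = File.read!(path)
```

Because every increment runs under the same resource id, the reads and writes happen one at a time and no update is lost.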