Pre-requisite concepts:

- Broadway and multi-process pipelining

- GenServer calls vs casts

The Problem

Logflare's ingestion pipeline for one of Supabase's log sources was falling over and crashing, resulting in a periodic bootloop where the pipeline for that particular source was restarting every few hours.

The Symptoms

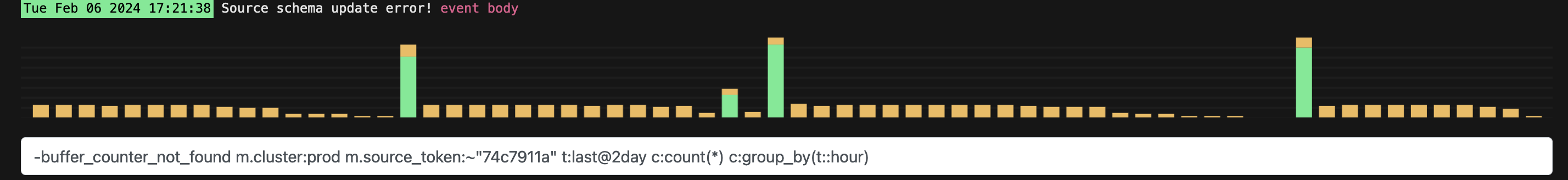

Each time it restarted, it would cause some events to get dropped and 500 request codes would spike momentarily, however it would automatically recover and continue ingesting events.

However, within a few hours, we would start to see a huge spike in logs, where the pipeline and all related processes would crash and burn, restarting the loop.

Each warning log (indicated by the yellow bars) emitted related to a type error that the Schema process was emitting.

An aside for context: The Schema process in Logflare is responsible for identifying if there are any changes between an incoming log event and the underlying BigQuery table schema. If there is a difference, then we will attempt to patch and update the BigQuery table with the new column and datatype detected.

The Solution

Digging a little deeper in the logs and the pattern of the restarts, it was clear that there was an issue with the ingestion pipeline, specifically at possible places that would cause a restart of the entire supervision tree.

In the Elixir/Erlang world, there are only a few possibilities that would cause the entire supervision tree to terminate:

- the supervisor is terminated abnormally through a crash

- the supervisor is terminated normally by stopping

- the children of the supervisor keep crashing to a point where the supervisor does a fresh restart.

Figuring out the Root Cause

But the evidence did not suggest that the Schema process was the main cause of the restart. If so, there would be clear logs of the Schema process exiting and restarting.

Furthermore, the pattern of the log warnings indicated a gradual drop over time of the Schema warnings. This meant that less and less logs were getting ingested over time, as the schema check was performed on each ingest. The warning itself was an indicator of where the underlying issue was, and it was where I started off my investigation.

After closer observation of the code, it was evident that there was a bottleneck when attemtping to execute the Schema checks. It was performing a syncronous block to perform the check on each incoming event. Although any errors returned from the check would not cause the calling processs to crash, the blocking call would cause other processes attempting to perform the check to wait. And because of Broadway's 0 max_restarts behaviour, it would result in the entire pipeline restarting. All it took was to have a slower than normal API request to the BigQuery API, or to have a sudden influx of log events occur.

And because of the sheer incoming volume getting streamed into this particular log source, the bottleneck was getting reached, causing this bootloop pattern to surface.

The fix was straightforward: make the schema checks asyncronous, such that it would not block the calling process. This way, throughput of ingestion would be maintained, and any errors or slowdowns from the Schema process would not bubble up and disrupt the ingestion.

As to why the Schema warnings would slow down over time, that is a mystery that we may never know. As with any sufficiently complex distributed server setup, it is very hard to diagnose such behaviours unless you can replicate it. I have a variety of hypotheses, such as Google APIs rejecting us or rate-limiting due to errors, or memory issues, but these are just thoughts.

The resultant pull requests are here:

- for the main implementation changes - https://github.com/Logflare/logflare/pull/1962

- and a followup bugfix - https://github.com/Logflare/logflare/pull/1964